Own Your Intelligence: The Executive’s Guide to Local LLMs

In the current tech landscape, the pendulum is swinging back from “everything in the cloud” to “sovereign local intelligence.” For a CEO or CTO, the motivation isn’t just a hobbyist’s curiosity; it’s about de-risking your AI strategy. Relying solely on external APIs means your proprietary data, board papers, and trade secrets are sent to third parties. By hosting your own Large Language Model (LLM), you transform AI from a rented service into a private utility.

The Strategic Why: Beyond the Hype

Before diving into the “how,” let’s look at why leadership is prioritizing local deployments:

- Data Sovereignty: Your prompts and organizational knowledge never leave your infrastructure.

- Predictable Costs: You trade unpredictable, usage-based API bills for an upfront hardware investment.

- Offline Capability: Your internal copilots and automation tools keep working even if the internet (or the AI vendor) goes down.

- Workflow Integration: You can bake AI directly into your Python scripts and internal tools, with no network round-trip to an external provider.

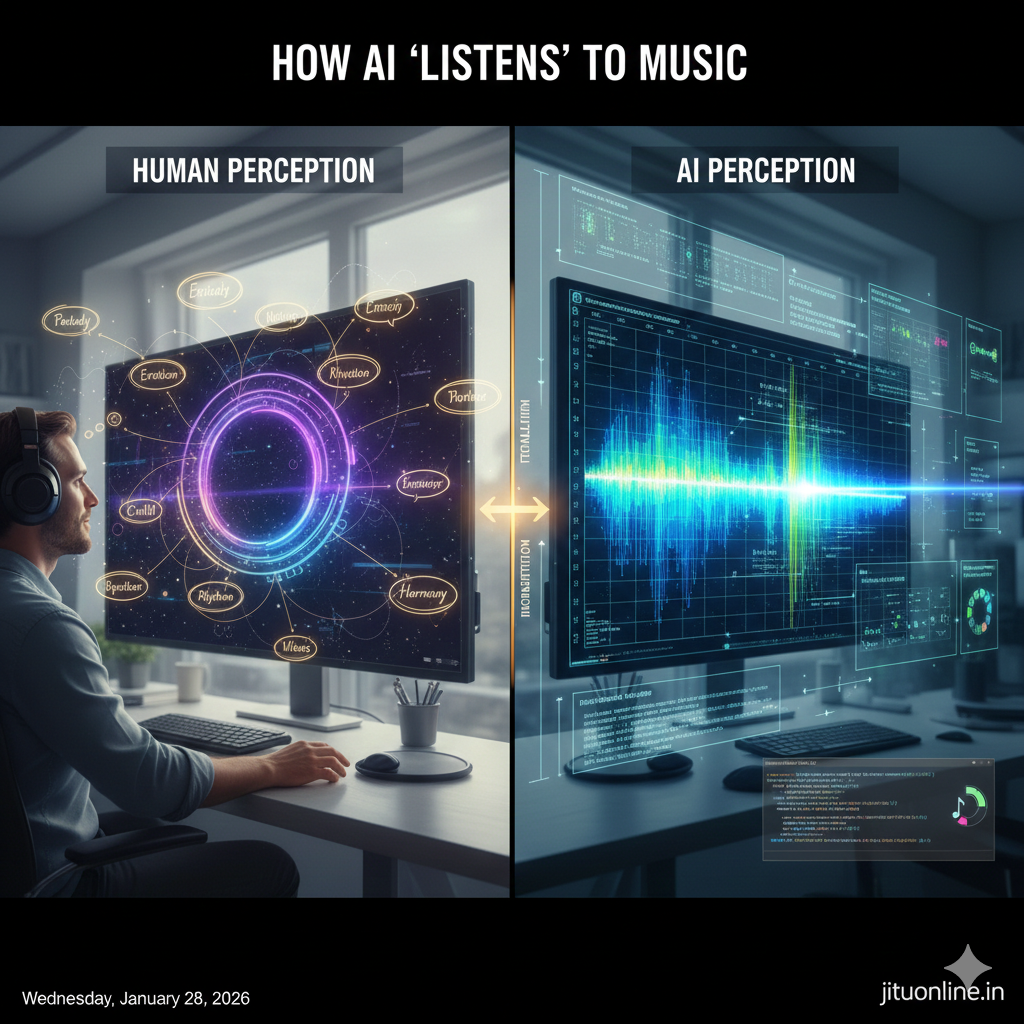

Understanding the Architecture

A local LLM setup is essentially a stack. To communicate this to your team, think of it in four layers:

- The Hardware: The physical “grunt” (GPU/RAM).

- The Runtime (Host): The software engine that manages the model (e.g., Ollama).

- The Model: The specific “brain” you download (e.g., Llama 3, Mistral).

- The Interface: How you talk to it (Terminal, Web UI, or Python API).
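To make the layers concrete, here is a minimal sketch, assuming Ollama is the runtime on its default port 11434, in which a Python script (the interface layer) asks the runtime which models are installed. It uses only the standard library and Ollama’s /api/tags endpoint; the helper names `model_names` and `list_local_models` are illustrative, not part of any library.

```python
import json
import urllib.request

# Runtime layer: assumed to be Ollama on its default local address.
OLLAMA_URL = "http://localhost:11434"


def model_names(tags_payload: dict) -> list[str]:
    """Model layer: extract installed model names from an /api/tags response."""
    return [m["name"] for m in tags_payload.get("models", [])]


def list_local_models(base_url: str = OLLAMA_URL) -> list[str]:
    """Interface layer: ask the runtime which models are available locally."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as response:
        return model_names(json.load(response))
```

With Ollama running, `list_local_models()` returns the names of the models you have pulled; if the server is not running, `urlopen` raises a connection error instead.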

Hardware: The Real Success Factor

The biggest mistake managers make is assuming any laptop can run any model. Whether a model runs at all, and how fast, depends mainly on VRAM (video RAM) and quantization.

What is quantization? It’s a technique that stores a model’s weights at lower numerical precision (for example, 4 bits per weight instead of 16). A quantized model uses significantly less memory while retaining most of the original model’s output quality.
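The memory savings follow from simple arithmetic: weight memory is roughly the parameter count times the bits per weight, divided by 8 to get bytes. The sketch below is a rule-of-thumb estimate (the function name is hypothetical). It ignores the context cache and runtime overhead, and real quantized files are usually somewhat larger than the raw figure, so treat the result as a floor rather than a sizing guarantee.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Rough memory for the model weights alone, in GB (1 GB = 10**9 bytes).

    Ignores the context (KV) cache and runtime overhead, so treat it as a floor.
    """
    # (params_billion * 1e9 weights) * (bits / 8 bytes each) / 1e9 bytes per GB
    return params_billion * bits_per_weight / 8


# Compare an 8B and a 70B model at common precision levels.
for params in (8, 70):
    for bits in (16, 8, 4):
        print(f"{params}B model at {bits}-bit: ~{weight_memory_gb(params, bits):.0f} GB")
```

For an 8B model this gives about 16 GB at 16-bit but about 4 GB at 4-bit, while a 70B model still needs about 35 GB at 4-bit, more than a single 24GB consumer GPU holds.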

Sizing Guide for Decision Makers

| Tier | Typical Specs | Best Use Case |
|---|---|---|
| Minimum | 8GB RAM, CPU-only | Proof of concept; very slow. |
| Comfortable | 16GB RAM, Apple Silicon (M2/M3) or NVIDIA RTX 3060 | Daily drafting, summarization, and coding help. |
| Power User | 32GB+ RAM, NVIDIA RTX 4090 (24GB VRAM) | Fast, complex reasoning; handling large documents. |
| Enterprise | Multi-GPU Workstation (64GB+ VRAM) | Running the largest models (70B+) at peak speed. |

Setup Guide: Choosing Your Path

There are two primary ways to get your organization started.

Path A: Ollama (Best for Developers & Automation)

Ollama is a lightweight, command-line driven tool. It is the “Docker” of the AI world.

- Install: Download from Ollama.com.

- Run: Open your terminal and type `ollama run llama3`. The first run downloads the model, then opens an interactive chat.

- Integrate: Ollama also serves a local REST API on port 11434 (http://localhost:11434).
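Before wiring scripts to Ollama, a quick health check confirms the server is listening. This sketch assumes Ollama’s default address and its /api/version endpoint; `ollama_version` is an illustrative helper name, and only the standard library is used.

```python
import json
import urllib.request
from typing import Optional


def ollama_version(base_url: str = "http://localhost:11434") -> Optional[str]:
    """Return the running Ollama server's version string, or None if unreachable."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/version", timeout=3) as response:
            return json.load(response).get("version")
    except (OSError, ValueError):
        # OSError covers refused connections and timeouts (URLError subclasses it);
        # ValueError covers a response body that is not valid JSON.
        return None
```

A script can call `ollama_version()` at startup and print a clear message asking the user to start Ollama when it returns None, instead of failing later with a raw connection error.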

Path B: LM Studio (Best for Managers & Analysts)

If you prefer a visual interface (GUI) similar to ChatGPT, LM Studio is the gold standard. It allows you to search for models visually and monitor your hardware usage in real-time.
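LM Studio can also serve the loaded model through a local server that speaks the OpenAI chat-completions format (by default at http://localhost:1234/v1), and Ollama offers a similar OpenAI-compatible route under http://localhost:11434/v1. The sketch below assumes those defaults; the helper names are hypothetical, and the model name you pass must match a model loaded in your server.

```python
import json
import urllib.request

# Assumed default base URLs for the two local servers.
LM_STUDIO_URL = "http://localhost:1234/v1"
OLLAMA_OPENAI_URL = "http://localhost:11434/v1"


def chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style /chat/completions POST request for a local server."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def reply_text(completion: dict) -> str:
    """Extract the assistant's text from an OpenAI-style response."""
    return completion["choices"][0]["message"]["content"]


def local_chat(base_url: str, model: str, prompt: str) -> str:
    """Send one prompt to a local OpenAI-compatible server and return the reply."""
    with urllib.request.urlopen(chat_request(base_url, model, prompt), timeout=120) as response:
        return reply_text(json.load(response))
```

For example, `local_chat(LM_STUDIO_URL, "your-model-name", "Draft a two-line status update.")`, where `"your-model-name"` is a placeholder. Because both servers accept the same request shape, switching between them, or away from a cloud endpoint, is mostly a change of base URL.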

Implementation: The Python Perspective

For a Tech Lead, the value lies in automation. Once your local model is running via Ollama, you can point your existing Python scripts to localhost instead of an expensive OpenAI endpoint.

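```python
import requests

def private_ai_query(task_description):
    # Your local Ollama server's generate endpoint (default port 11434)
    url = "http://localhost:11434/api/generate"
    data = {
        "model": "mistral",  # must be downloaded first, e.g. with: ollama pull mistral
        "prompt": f"Act as a Senior PM. Task: {task_description}",
        "stream": False,  # return one JSON object instead of a token stream
    }
    response = requests.post(url, json=data, timeout=120)
    response.raise_for_status()  # surface HTTP errors, e.g. a model that is not installed
    return response.json().get("response")

# Example: automating a project status summary.
# The model cannot query Jira itself, so pass the ticket text in the prompt.
ticket_text = "..."  # placeholder: paste or fetch the ticket summaries here
print(private_ai_query(f"Summarize these Jira tickets for the Phoenix Project:\n{ticket_text}"))
```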
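To check speed before scaling up to a larger model, note that a non-streaming /api/generate response from Ollama also reports `eval_count` (tokens generated) and `eval_duration` (in nanoseconds). The following is a minimal standard-library sketch that returns the answer together with tokens per second; `timed_query` and `tokens_per_second` are illustrative names, and the default model assumes you have already pulled llama3.

```python
import json
import urllib.request

OLLAMA_GENERATE_URL = "http://localhost:11434/api/generate"


def tokens_per_second(result: dict) -> float:
    """Generation speed from Ollama's eval_count and eval_duration (nanoseconds)."""
    duration_ns = result.get("eval_duration", 0)
    if not duration_ns:
        return 0.0  # field missing or zero: no speed to report
    return result.get("eval_count", 0) / (duration_ns / 1e9)


def timed_query(prompt: str, model: str = "llama3") -> tuple[str, float]:
    """Send a non-streaming request and return (answer, tokens per second)."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_GENERATE_URL,
        data=body,  # supplying data makes this a POST request
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=300) as response:
        result = json.load(response)
    return result.get("response", ""), tokens_per_second(result)
```

Running `answer, speed = timed_query("Summarize the benefits of local LLMs in one sentence.")` on each candidate model gives a like-for-like speed comparison on your own hardware.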

Best Practices & Common Pitfalls

- Start Small: Don’t try to run a 70-billion-parameter model on a standard laptop. Start with an 8B model (like Llama 3) (…) verify the speed, then scale up.

- VRAM is King: If you are buying hardware, prioritize GPU memory (VRAM) over CPU speed.

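- Use RAG for Context: Don’t try to “train” a model on your data. Use Retrieval-Augmented Generation (RAG) instead: at query time, retrieve the most relevant passages from your local PDFs and docs and include them in the prompt, so the model answers from your documents without any retraining.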
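The retrieval step can be sketched in a few lines. This is a deliberately simple stand-in: it scores pre-extracted text chunks by word overlap with the question, whereas production RAG setups typically use an embedding model and a vector index. The function names are hypothetical, and extracting text from your PDFs is assumed to have happened already.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set used for crude matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_chunks(question: str, chunks: list[str], k: int = 3) -> list[str]:
    """Return up to k chunks sharing the most words with the question."""
    q_words = tokenize(question)
    # sorted() is stable, so ties keep their original document order.
    ranked = sorted(chunks, key=lambda c: len(q_words & tokenize(c)), reverse=True)
    # Drop chunks with no overlap rather than padding the prompt with noise.
    return [c for c in ranked[:k] if q_words & tokenize(c)]


def build_rag_prompt(question: str, chunks: list[str], k: int = 3) -> str:
    """Assemble a grounded prompt from the best-matching chunks."""
    context = "\n\n".join(top_chunks(question, chunks, k))
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )
```

The resulting string is an ordinary prompt, so it can be sent to Ollama or LM Studio like any other. Word overlap is easily fooled by common words such as “the”, which is exactly the weakness embedding-based retrieval addresses.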

The Verdict

Local LLMs have matured. In 2026 (…) they are no longer just for researchers. They are a practical, secure, and cost-effective way for tech-forward companies to harness AI without sacrificing their data privacy.

Whether you choose the CLI power of Ollama or the visual ease of LM Studio, the best time to start building your private AI lab is now.

- Executive Guide to Local LLMs Build Your Private AI - April 25, 2026
