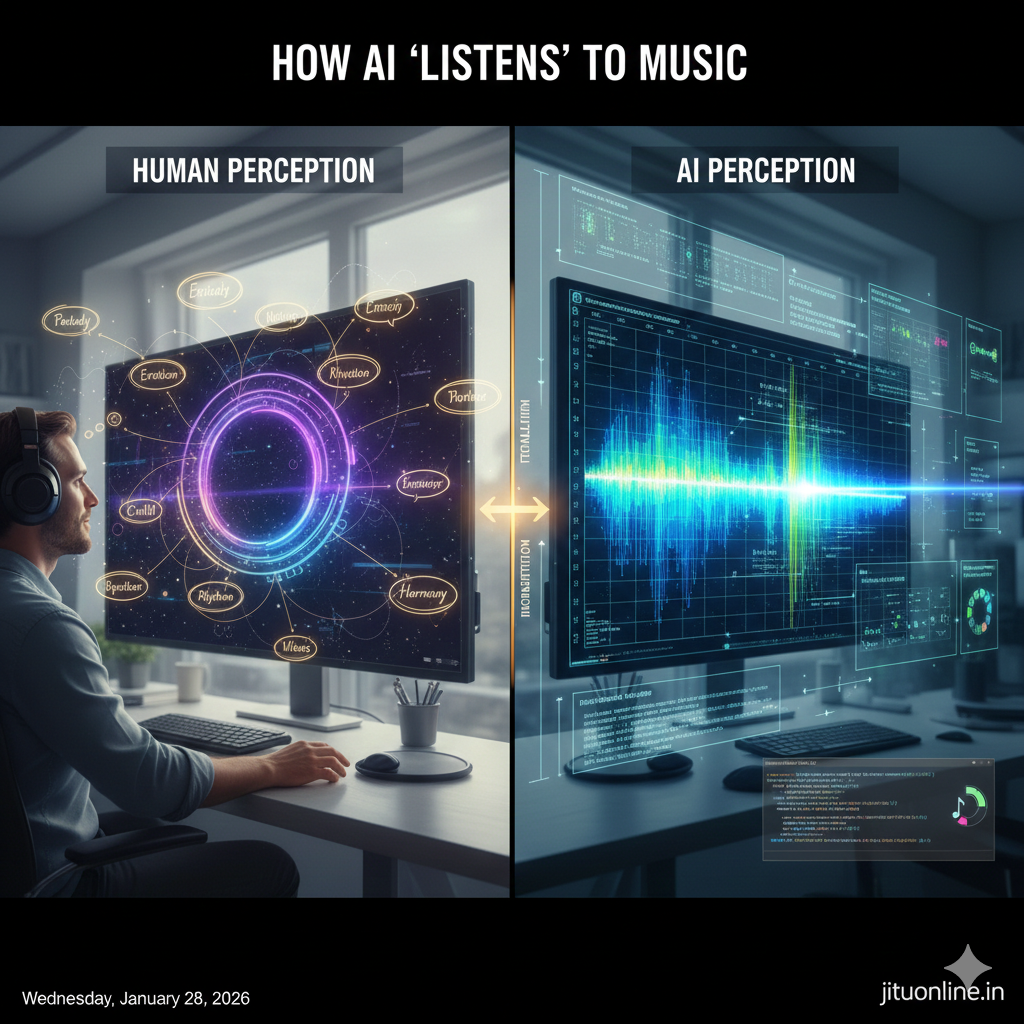

Close your eyes… imagine a melody drifting through the air—a piano’s gentle rhythm, a singer’s warm tone, perhaps the faint echo of strings behind it. To us, this is emotion and art. But to an AI, listening is an act of decoding… a high-speed translation of vibration into vision.

While we “feel” the beat, the machine solves it like a mathematical puzzle. Here is how AI turns waves of sound into structured understanding.

1. The Symphony of Numbers

Every song begins as a physical vibration. When an AI “hears,” those vibrations are captured as a stream of numbers: amplitudes recorded tens of thousands of times every second (44,100 times per second for CD-quality audio).
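To make the “stream of numbers” concrete, here is a minimal sketch that samples a pure tone the way a recorder would. The 44,100 Hz rate is the CD-audio standard; the function name `sample_tone` and the 440 Hz test note are illustrative assumptions, not part of any particular AI system.

```python
import math

SAMPLE_RATE = 44_100  # samples per second (CD-quality audio, assumed here)

def sample_tone(freq_hz, duration_s, sample_rate=SAMPLE_RATE):
    """Return the amplitude of a pure sine tone at each sampling instant.

    Every value lies in [-1.0, 1.0]; the list as a whole is the waveform.
    """
    n_samples = round(duration_s * sample_rate)
    return [
        math.sin(2 * math.pi * freq_hz * n / sample_rate)
        for n in range(n_samples)
    ]

# 10 milliseconds of the note A4 (440 Hz)
samples = sample_tone(440.0, 0.01)
print(len(samples))   # how many numbers 10 ms of sound turns into
print(samples[:5])    # the first few amplitudes
```

Even a hundredth of a second becomes hundreds of numbers, which is why the raw waveform is rarely what a model looks at directly.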

However, raw sound (the Waveform) is hard to read directly. If a drum and a piano play at the same time, their waves add together into one tangled line. To untangle it, the AI uses a mathematical tool called the Fourier Transform. It slices the sound into short, overlapping windows, each a few tens of milliseconds long, and measures how much energy each frequency carries in every window. Laid side by side, those slices form a Spectrogram… essentially turning the sound into a picture.

- The Vision: High notes (whistles) appear at the top of the map; low notes (bass) appear at the bottom.

- The Result: The AI doesn’t “hear” the song… it “sees” it as a heatmap of energy.
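The slicing-and-transforming step described above can be sketched in a few lines. This is a deliberately naive version using only the standard library; real systems would use an optimized FFT (for example from NumPy or SciPy). The frame size, hop length, 8,000 Hz sample rate, and test tones are all assumptions chosen to keep the example small.

```python
import cmath
import math

def dft_magnitudes(frame):
    """Magnitude of each frequency bin (0 .. N/2) of one frame, via a naive DFT."""
    n = len(frame)
    mags = []
    for k in range(n // 2 + 1):
        total = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(frame)
        )
        mags.append(abs(total))
    return mags

def spectrogram(samples, frame_size=256, hop=128):
    """Slice the signal into overlapping windowed frames and transform each one."""
    # A Hann window tapers each frame's edges to reduce spectral leakage.
    window = [
        0.5 - 0.5 * math.cos(2 * math.pi * i / (frame_size - 1))
        for i in range(frame_size)
    ]
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = [samples[start + i] * window[i] for i in range(frame_size)]
        frames.append(dft_magnitudes(frame))
    return frames  # frames[t][k]: energy at time step t, frequency bin k

sample_rate = 8000  # assumed, kept low so the naive DFT stays fast
# A loud low tone (250 Hz) and a quieter high tone (2000 Hz) played together.
signal = [
    math.sin(2 * math.pi * 250 * n / sample_rate)
    + 0.5 * math.sin(2 * math.pi * 2000 * n / sample_rate)
    for n in range(1024)
]

spec = spectrogram(signal)
bin_hz = sample_rate / 256           # width of one frequency bin in Hz
first = spec[0]                      # one vertical slice of the "picture"
loudest_bin = max(range(len(first)), key=first.__getitem__)
print(loudest_bin * bin_hz)          # frequency of the brightest spot
```

In the waveform the two tones are fused into one line. In the spectrogram they show up as two separate bright bands, one low and one high.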

2. Layered Detective Work in Sound

Just as an AI deciphers pixels to find a face, listening AIs perform their own layered detective work on this “sound-map.”

- The Pulse Detectives: The first layers pick up rhythm and volume… the basic heartbeat of the track.

- The Texture Detectives: Deeper layers separate frequencies. They isolate the “soulful” texture of a human voice from the “solid” block of a digital piano.

- The Story Detectives: Higher layers detect patterns over time… recognizing the repeating chorus or the emotional rise before the bridge.

Bit by bit, the machine learns to listen not with ears… but with structured curiosity.
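A trained network learns its own detectors, so the following is only a hand-written stand-in for what a “pulse detective” might be sensitive to. It measures loudness frame by frame (an RMS energy envelope) and then uses autocorrelation to find how often that loudness pattern repeats. The click track, the sample rate, and the function names are assumptions made for illustration.

```python
import math

def frame_energy(samples, frame_size):
    """RMS loudness of each non-overlapping frame (a crude 'volume' feature)."""
    return [
        math.sqrt(sum(x * x for x in samples[i:i + frame_size]) / frame_size)
        for i in range(0, len(samples) - frame_size + 1, frame_size)
    ]

def best_period(envelope, min_lag, max_lag):
    """Lag (in frames) at which the envelope best matches a shifted copy of itself."""
    mean = sum(envelope) / len(envelope)
    centered = [e - mean for e in envelope]

    def autocorr(lag):
        return sum(a * b for a, b in zip(centered, centered[lag:]))

    return max(range(min_lag, max_lag + 1), key=autocorr)

# Synthetic click track: a 20 ms burst every half second (assumed test input).
sample_rate = 1000
frame_size = 50
signal = [0.0] * 4000
for beat_start in range(0, 4000, 500):
    for n in range(beat_start, beat_start + 20):
        signal[n] = 1.0

envelope = frame_energy(signal, frame_size)
period_frames = best_period(envelope, min_lag=2, max_lag=40)
seconds_per_beat = period_frames * frame_size / sample_rate
bpm = 60 / seconds_per_beat
print(bpm)  # estimated tempo in beats per minute
```

The difference in a real listening model is that nobody writes `frame_energy` or `best_period` by hand; comparable detectors emerge from training on many examples.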

3. The Latent Space of Music

Now, imagine the AI’s mental library: a vast world where every musical concept coexists. This is the Latent Space. It is easiest to picture in 3D, although real models typically use hundreds of dimensions.

In this library, every song has a GPS coordinate based on its “essence.”

- Jazz might float near Blues.

- Lo-fi beats live near Ambient waves.

- Energetic anthems are mapped far away from Soothing lullabies.

When the AI encounters a new song, it doesn’t look the genre up in a list. It computes where that song sits in this landscape of harmony and resonance, then judges it by its nearest neighbors. It can tell a sad song from a happy one not because it “feels,” but because the two land in different regions of the map.
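Here is a toy version of that lookup. The coordinates below are invented for illustration (three made-up axes: energy, acousticness, swing); a real model would learn its own coordinates, usually with far more dimensions. Closeness is measured with cosine similarity, one common choice among several.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two vectors by angle: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, hand-picked for this example only.
# Axes (also hypothetical): energy, acousticness, swing.
library = {
    "jazz":    [0.50, 0.80, 0.90],
    "blues":   [0.40, 0.80, 0.70],
    "lo-fi":   [0.20, 0.40, 0.30],
    "ambient": [0.10, 0.50, 0.10],
    "anthem":  [0.95, 0.10, 0.20],
    "lullaby": [0.05, 0.90, 0.10],
}

def nearest(embedding, library):
    """Name of the library entry whose vector points most nearly the same way."""
    return max(
        library,
        key=lambda name: cosine_similarity(embedding, library[name]),
    )

new_song = [0.45, 0.75, 0.80]  # hypothetical coordinates of an unseen track
print(nearest(new_song, library))
print(cosine_similarity(library["jazz"], library["blues"]))      # close neighbors
print(cosine_similarity(library["anthem"], library["lullaby"]))  # far apart
```

The arithmetic is simple; the hard part, which this sketch skips entirely, is learning coordinates that place similar music close together.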

The Executive Summary

| The Experience | The AI Logic | The Result |
| --- | --- | --- |
| Melody | Frequency Mapping | Pattern Recognition |
| Rhythm | Temporal Analysis | Structural Alignment |
| Emotion | Latent Space Mapping | Contextual Meaning |

The Bottom Line

AI doesn’t truly hear… it interprets. It understands that sound is more than just vibration; it is intent and structure woven into data. By extracting meaning from frequencies and organizing them in a conceptual space, AI participates in a unique conversation between mathematics and melody.

Every note is both a number… and a story.
